GenAI voicebots on AWS: architecture and deployment

Learn how voice AI works, how it differs from chatbots, and how to deploy voicebots on AWS using both managed and custom architectures.

Amazon Connect

Amazon Bedrock

Amazon Polly

Amazon Lex

Amazon Transcribe

LangGraph

If your business involves phone calls with customers, partners, or citizens, there’s likely room for a voicebot in your organization. Not the old-school robocalls with pre-recorded messages that everyone dreads — we’re talking about intelligent, conversational AI that understands context, handles unexpected questions, and responds naturally in real time.

At Chaos Gears, phone-based voicebots on AWS are one of our core focus areas — bots that answer incoming calls and proactively make outbound ones over real phone networks. We’ve deployed them across healthcare, finance, insurance, and telecom. In this post, we’ll walk you through how voicebots actually work and how to build them on AWS.

From chatbot to voicebot: it’s simpler than you think

To understand voicebots, start with chatbots. The architecture you already know — a user sends text input, an AI system interprets the intent, calls backend tools (databases, APIs, CRMs), and returns a text response — is the foundation.

Chatbot architecture — user → AI services → tools → response

A voicebot is essentially this same architecture with two additional layers wrapped around it:

Voicebot ≈ Chatbot + STT + TTS

Speech-to-Text (STT) converts the caller’s spoken words into text that the AI system can process. Text-to-Speech (TTS) converts the AI’s text response back into a natural-sounding voice. The core AI logic, dialog management, and business integrations remain the same.

Voicebot architecture: user (voice) → speech-to-text → AI system → tools → text-to-speech → user (voice)

This means that if you already have a working chatbot, adding voice capabilities is more straightforward than building from scratch. You’re leveraging the same backend architecture, tools, and business rules.
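To make the "Chatbot + STT + TTS" idea concrete, here is a minimal sketch of wrapping an existing text chatbot with two extra stages. Everything in it is an assumption for illustration: `make_voicebot`, `fake_stt`, `existing_chatbot`, and `fake_tts` are hypothetical names, and the stand-in stages just encode and decode bytes instead of calling a real speech service.

```python
from typing import Callable

# Hypothetical type aliases: each stage is just a function.
SpeechToText = Callable[[bytes], str]   # audio in, transcript out
Chatbot = Callable[[str], str]          # text in, text out (the bot you already have)
TextToSpeech = Callable[[str], bytes]   # text in, audio out


def make_voicebot(
    stt: SpeechToText, chatbot: Chatbot, tts: TextToSpeech
) -> Callable[[bytes], bytes]:
    """Wrap a text chatbot with STT on the way in and TTS on the way out."""

    def handle_turn(caller_audio: bytes) -> bytes:
        transcript = stt(caller_audio)    # voice -> text
        reply_text = chatbot(transcript)  # unchanged chatbot logic
        return tts(reply_text)            # text -> voice

    return handle_turn


# Stand-in stages so the sketch runs without any cloud services.
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")


def existing_chatbot(text: str) -> str:
    if "order" in text.lower():
        return "Your order is on its way."
    return "Sorry, could you rephrase that?"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")


voicebot = make_voicebot(fake_stt, existing_chatbot, fake_tts)
reply_audio = voicebot(b"Where is my order?")
```

The chatbot function is untouched; only the two outer stages are new, which is why an existing chatbot backend can usually be reused as-is.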

There’s also a second approach emerging: speech-to-speech models like Amazon Nova Sonic that handle voice input and output directly, without separate STT and TTS steps. It’s promising, but language support is still limited — most of our clients operate in Polish, which ruled it out for our use cases. We focus on the STT + Chatbot + TTS architecture because it works reliably across languages today.

Where voicebots differ from chatbots (and why it matters)

While the architecture is similar, voicebots have much stricter operational requirements. Three areas demand special attention.

- Latency. In a chatbot, users tolerate 2–3 seconds of delay — they see a typing indicator and know something is happening. In a voicebot, silence after 1–2 seconds feels awkward and broken. Every millisecond counts, and this drives specific infrastructure choices: faster processing, optimized pipelines, and careful service selection.

- Error handling. Chatbot users can scroll back and re-read messages. Voicebot users can’t easily re-listen to previous responses. You must be crystal clear the first time. This requires better STT accuracy, more robust fallback strategies, and confirmation mechanisms at key points in the conversation.

- User experience. Chatbots have visual elements — buttons, menus, images — and users control the pace. Voicebots are purely auditory. You can’t show 10 menu options; you must keep choices simple and memorable. The conversation design must feel like you’re talking to a real person. This fundamentally changes how you design prompts, responses, and conversation flows.
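The confirmation mechanism mentioned under error handling can be sketched as a small decision function. This is an illustration under stated assumptions, not a production policy: it assumes the STT engine reports a confidence score between 0 and 1, and the names `Transcript`, `decide`, and both thresholds are hypothetical values you would tune against real call recordings.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Transcript:
    text: str
    confidence: float  # assumed range 0.0 to 1.0, as reported by the STT engine


# Hypothetical thresholds, not recommendations.
REPROMPT_BELOW = 0.50
CONFIRM_BELOW = 0.85


def decide(transcript: Transcript, critical: bool = False) -> Tuple[str, str]:
    """Return (action, prompt): re-ask, confirm, or accept what was heard.

    `critical` marks key points in the conversation (dates, amounts,
    identifiers) where the bot confirms even a high-confidence transcript.
    """
    if transcript.confidence < REPROMPT_BELOW:
        return ("reprompt", "Sorry, I didn't catch that. Could you say it again?")
    if critical or transcript.confidence < CONFIRM_BELOW:
        return ("confirm", f"I heard: {transcript.text}. Is that right?")
    return ("accept", "")
```

Keeping the prompts short matters here for the same reason as in the user experience point: the caller cannot scroll back, so each confirmation should carry one fact.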

Real-world applications: inbound and outbound

Voicebots operate in two modes, and understanding both helps identify opportunities in your organization.

Inbound is when a customer calls your business and the voicebot answers. Instead of navigating a traditional button-based IVR menu, the caller has an intelligent conversation. Think insurance claim filing, hotel booking modifications, retail order tracking, or telecom troubleshooting — routine, high-volume calls that voicebots handle efficiently around the clock.

Outbound is when the voicebot proactively calls customers on behalf of your business. A hospital calling patients with appointment reminders. A dealership notifying owners about vehicle recalls. A utility company informing customers about planned maintenance. These are personalized, conversational calls, not robocalls. The voicebot can ask questions, handle responses, and update information based on what the person on the other end says.

Both patterns reduce wait times, operate 24/7, handle high call volumes, and free up human agents for cases that truly require empathy and complex problem-solving.

Applicable across industries

The common thread across voicebot use cases is high volumes of repetitive phone interactions — and that’s nearly every industry. In healthcare, voicebots conduct preliminary patient interviews and schedule appointments. In finance and insurance, they handle account inquiries and claims intake. Tourism and hospitality use them for booking modifications and reservation confirmations. Retail deploys them for order tracking and returns. Telecom companies guide callers through troubleshooting. Education, utilities, automotive, public services — the list goes on.

If your organization handles phone calls at any meaningful scale, there’s almost certainly a voicebot opportunity worth exploring.

How to deploy voicebots on AWS

Every phone-based voicebot solution has three main building blocks.

High-level architecture — User ↔ Telecom Provider ↔ Call Management ↔ Voicebot

1. Telecom Provider

This is your gateway to the phone network — it handles phone connectivity (landline, mobile, VoIP), phone numbers, call lifecycle management, audio streaming, scalability across concurrent calls, and regulatory compliance.

For AWS, the primary option is Amazon Connect, a managed contact center service originally built for Amazon’s own customer service. It provides phone numbers in 60+ countries, handles inbound and outbound calling, scales automatically, and integrates natively with other AWS services. For simple Lex-based bots, the integration is straightforward. However, for custom voicebots with sophisticated conversational AI, connecting through Amazon Connect requires custom integration work to get real-time audio streaming working properly.

Third-party providers like Twilio and Telnyx are also viable options. They offer mature platforms with robust APIs, broader international coverage in certain regions, and can be more flexible for complex telephony requirements.

The decision depends on your existing infrastructure, geographic and compliance requirements, whether you want everything in AWS or prefer a hybrid approach, and your cost considerations.

2. Call Management

This orchestration layer sits between the Telecom Provider and the VoiceBot. It handles call routing, outbound call scheduling, integration between layers, real-time monitoring, and artifact storage (recordings, transcripts).

You have two approaches here:

Managed: Amazon Connect handles most orchestration out of the box, including routing, campaign management, analytics, and storage. This works well for Lex-based voicebots with straightforward flows. You configure rather than code.

Custom serverless: Build your own Call Management layer using services like Amazon API Gateway, AWS Lambda, and Amazon DynamoDB (plus Amazon S3 for recordings, Amazon EventBridge for scheduling, Amazon CloudWatch for monitoring). This is just a backend serverless application with pure orchestration logic and no AI components. This approach is required when you need full control over audio streaming, session management, and complex orchestration logic.
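As a rough illustration of the custom serverless option, the sketch below shows what one piece of that orchestration logic could look like: a Lambda-style handler that registers an outbound call request arriving through an API Gateway proxy integration. It is a sketch under stated assumptions. The field names (`phone_number`, `scenario`), the `SCHEDULED` status, and the in-memory `CALLS` dictionary standing in for a DynamoDB table are all hypothetical.

```python
import json
import uuid
from datetime import datetime, timezone
from typing import Dict

# In-memory stand-in for an Amazon DynamoDB table, so the sketch runs locally.
CALLS: Dict[str, dict] = {}


def _response(status: int, payload: dict) -> dict:
    """Build a response in the API Gateway proxy integration shape."""
    return {"statusCode": status, "body": json.dumps(payload)}


def handler(event: dict, context: object) -> dict:
    """Register an outbound call request. Pure orchestration, no AI involved."""
    try:
        body = json.loads(event.get("body") or "{}")
    except json.JSONDecodeError:
        return _response(400, {"error": "body must be valid JSON"})

    phone_number = body.get("phone_number")  # hypothetical field name
    if not phone_number:
        return _response(400, {"error": "phone_number is required"})

    call_id = str(uuid.uuid4())
    CALLS[call_id] = {
        "call_id": call_id,
        "phone_number": phone_number,
        "scenario": body.get("scenario", "default"),  # hypothetical field name
        "status": "SCHEDULED",
        "created_at": datetime.now(timezone.utc).isoformat(),
    }
    return _response(201, {"call_id": call_id, "status": "SCHEDULED"})
```

In a real deployment the dictionary write would become a DynamoDB put, and a separate scheduled job would pick up `SCHEDULED` records and ask the Telecom Provider to place the calls.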

3. VoiceBot (the AI brain)

This is where the intelligence lives. It handles speech processing (STT and TTS), conversational intelligence (NLU, dialog management, response generation), action execution (calling backend APIs, booking appointments, updating records), communication with other modules, and context management across the entire call.

Approach A: Managed (Connect + Lex)

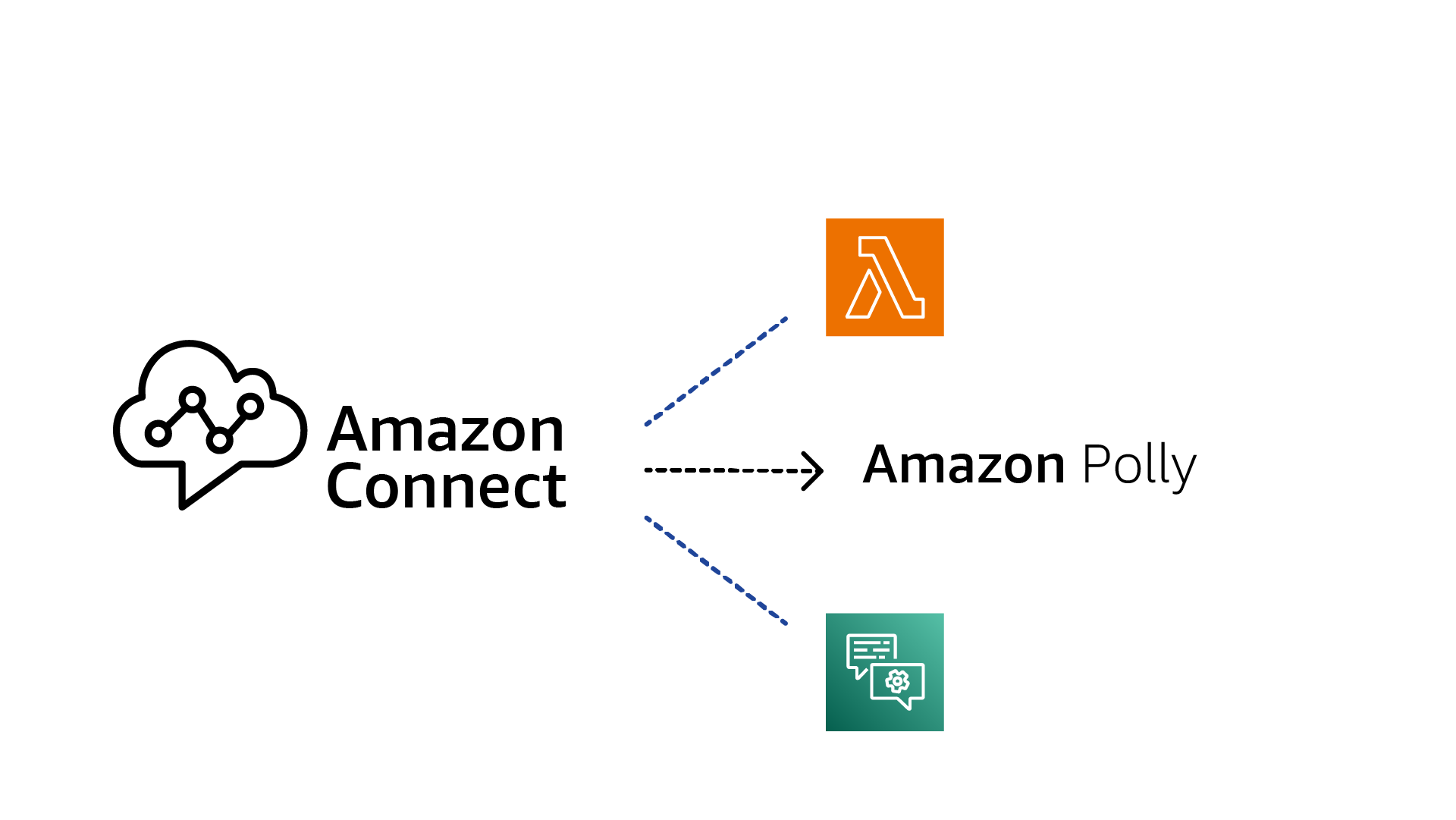

The most straightforward way to get started. Amazon Connect handles telephony, Amazon Lex handles conversational AI with intent recognition and dialog management, AWS Lambda functions execute business logic, and Amazon Polly converts responses to speech.

Managed approach: Connect + Lex + Polly + Lambda

This approach offers lower technical complexity, faster time to market, and fully managed scaling. It’s great for structured conversations with well-defined intents, such as appointment scheduling, account inquiries, and order status. The limitation: it is less flexible for complex, open-ended conversations, and sophisticated agent-based reasoning is harder to implement.
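For the managed approach, the Lambda piece is usually a fulfillment function that Lex calls once an intent's slots are filled. Below is a minimal sketch, assuming the Lex V2 event and response shape (`sessionState`, `intent`, `slots`, `messages`). The intent name `CheckOrderStatus`, the slot `OrderId`, and the `lookup_order_status` helper with its hard-coded data are hypothetical and stand in for a real backend call.

```python
from typing import Optional

# Hypothetical order data standing in for a real backend or database.
_ORDERS = {"A100": "out for delivery", "B200": "being packed"}


def lookup_order_status(order_id: str) -> Optional[str]:
    """Hypothetical business-logic helper; replace with a real API call."""
    return _ORDERS.get(order_id)


def get_slot(event: dict, name: str) -> Optional[str]:
    """Read a slot's resolved value from a Lex V2 event, or None if unfilled."""
    slots = event["sessionState"]["intent"].get("slots") or {}
    slot = slots.get(name)
    if not slot or not slot.get("value"):
        return None
    return slot["value"].get("interpretedValue")


def lambda_handler(event: dict, context: object) -> dict:
    """Fulfill the intent and tell Lex to close the dialog with a spoken message."""
    intent_name = event["sessionState"]["intent"]["name"]
    order_id = get_slot(event, "OrderId")
    status = lookup_order_status(order_id) if order_id else None

    if intent_name == "CheckOrderStatus" and status:
        state, message = "Fulfilled", f"Order {order_id} is {status}."
    else:
        state, message = "Failed", "Sorry, I couldn't find that order."

    return {
        "sessionState": {
            "dialogAction": {"type": "Close"},
            "intent": {"name": intent_name, "state": state},
        },
        # Lex hands this text to the voice channel, where it is spoken to the caller.
        "messages": [{"contentType": "PlainText", "content": message}],
    }
```

The handler returns a single short sentence on purpose, since the reply will be spoken rather than displayed.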

Approach B: Custom (AWS Fargate + Pipecat + LangGraph)

Custom approach: Fargate + Pipecat + LangGraph + Transcribe + Bedrock + Polly + OpenSearch

This is our architecture for sophisticated use cases requiring maximum flexibility. The Telecom Provider connects calls via WebSockets for real-time, bidirectional, low-latency audio streaming. AWS Fargate runs our custom application as serverless containers. We use Pipecat, an open-source framework designed for voice AI applications (audio streaming, timing, voice interaction patterns), combined with LangGraph, an agent framework that orchestrates complex conversational flows with foundation models.

On the AI services side: Amazon Transcribe handles real-time speech-to-text, Amazon Bedrock provides foundation models for conversational intelligence (the LangGraph agent orchestrates all interactions), Amazon Polly generates natural speech output, and Amazon OpenSearch Service stores the conversation history and the knowledge base for retrieval.
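The flow described above (audio in, transcription, agent, speech out) is essentially a streaming pipeline in which each stage consumes from one queue and produces to the next. The sketch below illustrates only that shape with plain `asyncio`; it does not use Pipecat or LangGraph, and the three stages are stand-ins for Transcribe, the Bedrock-backed agent, and Polly. All function names are hypothetical.

```python
import asyncio
from typing import List

END = None  # sentinel that marks the end of the call


async def stt_stage(audio_in: asyncio.Queue, text_out: asyncio.Queue) -> None:
    """Stand-in for streaming STT: turns each audio chunk into a transcript."""
    while (chunk := await audio_in.get()) is not END:
        await text_out.put(chunk.decode("utf-8"))
    await text_out.put(END)


async def agent_stage(text_in: asyncio.Queue, reply_out: asyncio.Queue) -> None:
    """Stand-in for the agent: keeps per-call context and produces a reply."""
    history: List[str] = []
    while (utterance := await text_in.get()) is not END:
        history.append(utterance)
        await reply_out.put(f"You said: {utterance} (turn {len(history)})")
    await reply_out.put(END)


async def tts_stage(reply_in: asyncio.Queue, audio_out: asyncio.Queue) -> None:
    """Stand-in for TTS: turns each text reply into audio bytes."""
    while (reply := await reply_in.get()) is not END:
        await audio_out.put(reply.encode("utf-8"))
    await audio_out.put(END)


async def run_call(chunks: List[bytes]) -> List[bytes]:
    """Wire the stages together and play a scripted call through them."""
    audio_in, texts, replies, audio_out = (asyncio.Queue() for _ in range(4))
    stages = [
        asyncio.create_task(stt_stage(audio_in, texts)),
        asyncio.create_task(agent_stage(texts, replies)),
        asyncio.create_task(tts_stage(replies, audio_out)),
    ]
    for chunk in chunks:  # in production this would be the WebSocket audio stream
        await audio_in.put(chunk)
    await audio_in.put(END)

    spoken: List[bytes] = []
    while (audio := await audio_out.get()) is not END:
        spoken.append(audio)
    await asyncio.gather(*stages)
    return spoken


spoken = asyncio.run(run_call([b"hello", b"book a visit"]))
```

Because the stages run concurrently, a later stage can start working as soon as the first item arrives, which is the property that keeps perceived latency low on a live call.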

This approach shines when you need agent-based conversations that go beyond simple slot-filling, tight control over the conversational experience, and complex integrations. The tradeoff is more development effort and deeper technical expertise.

How to choose: Start with Amazon Connect + Amazon Lex if your use cases are straightforward. Move to the custom architecture when you need conversational sophistication that managed services can’t easily support.

Understanding the architecture is one thing — running it in production is another. In the second part of this series, we share five lessons that cost us the most time and saved our clients the most pain.